AI video makes it easy to create polished content quickly. But for professional services firms, the question isn’t whether it’s possible. It’s whether it helps, or quietly undermines trust.

In most cases, the answer depends less on the technology and more on how it’s used.

There’s a moment some people experience while watching an AI-generated video of a real person, when something feels slightly off. The mouth moves correctly. The words make sense. The lighting is plausible. And yet.

They can’t name it. They just feel it.

That feeling has a name, and it has implications for professional services firms considering AI video as a cost-cutting measure in marketing.

The Uncanny Valley

In 1970, Japanese roboticist Masahiro Mori observed that as a representation of a human being becomes more realistic, audience response becomes more positive… up to a point. Just before the representation reaches full realism, something shifts. The near-perfect triggers unease rather than connection. Mori called this drop in the response curve “the uncanny valley”.

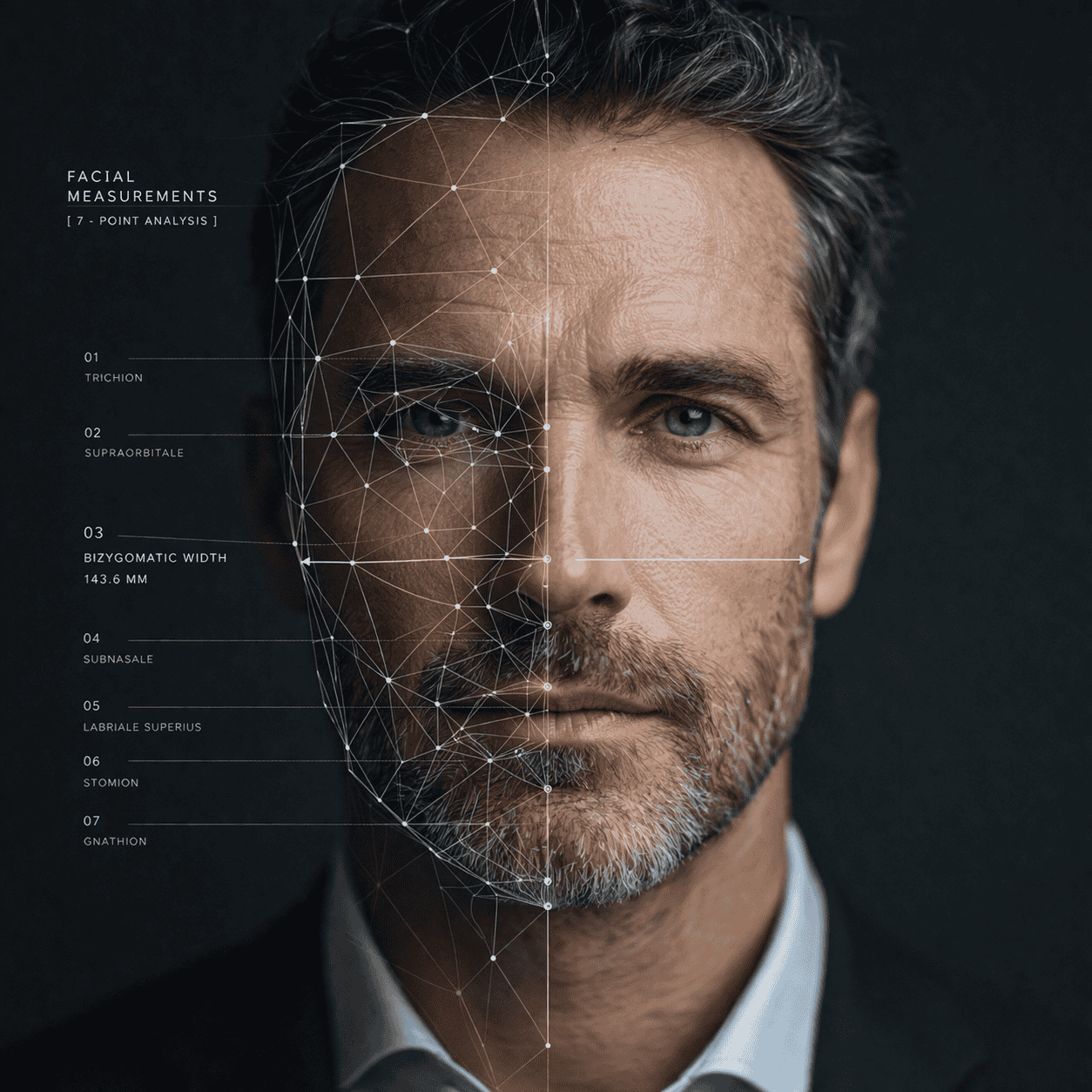

The phenomenon has been studied extensively since. What appears to drive it is a mismatch between appearance and behavior: the face looks human, but behavioral signals such as micro-expressions, eye-movement timing, and vocal cadence are subtly wrong. The brain detects the inconsistency before the conscious mind does. The result is an instinctive discomfort that the viewer often can’t articulate.

AI video in 2026 is improving rapidly. It is also, for most applications involving real people, squarely in the uncanny valley.

The HAL Problem

The visual uncanny valley is only part of the story. HAL 9000, the AI in Kubrick’s 2001: A Space Odyssey, had no face at all: just a red light and a calm voice. And yet HAL remains one of the most unsettling presences in cinema history. Not because of how it looked, but because of the mismatch between how it sounded and what it was doing.

That’s a behavioral uncanny valley. And it may be more relevant to AI video than the visual one.

When we watch another person speak, we’re reading far more than words and facial expressions. We’re tracking micro-timing (the fraction-of-a-second delay between a thought forming and an expression registering), blink rate, the way the eyes move before the mouth does, and all the subtle responsiveness that signals a conscious being is present behind the face.

AI video can reproduce the visual surface with increasing accuracy. What it cannot yet reliably reproduce is behavioral authenticity: the thousand small signals that tell a human observer, below the level of conscious thought, that someone is genuinely there.

The viewer watching an AI-generated video of a wealth manager may not identify it as artificial. They may simply feel, somewhere beneath articulation, that something is slightly HAL. That the calm, professional presentation doesn’t quite track with the presence behind it.

They’ll move on without knowing why.

Why This Matters for Some Industries More Than Others

For a consumer brand selling a product, AI video may be a reasonable production shortcut. The viewer’s relationship with the brand doesn’t depend on trusting a specific human being. The stakes of a subtle “wrongness” are low.

For a wealth manager, a law firm partner, or a financial advisor, the calculus is entirely different.

In professional services, the person is the product. A prospective client evaluating a wealth management firm isn’t just assessing the investment strategy; they’re deciding whether they trust the human being who will manage their money. That decision is made partly on rational grounds and partly on instinctive ones. How does this person present themselves? Do they feel trustworthy? Is something off?

Those instinctive assessments happen fast, within the first few seconds of exposure, research suggests. Once made, they’re surprisingly hard to override with rational argument.

An AI-generated video that triggers even a mild uncanny valley response in a prospective client may cost more than it saves. Not because the client identifies it as AI. Because they feel something they can’t name, and they move on.

The Cost Reduction Logic Has a Flaw

The pitch for AI video in professional services usually goes like this: record the subject once, and AI can generate unlimited variations (different scripts, different contexts, different channels) without bringing the subject back in front of a camera.

The cost reduction is real. The assumption embedded in the logic is that the AI-generated versions will perform equivalently to original footage. That assumption hasn’t been tested at scale for high-trust, high-stakes purchase decisions.

For a firm whose clients are making decisions about their retirement savings, their estate, or their company’s legal exposure, “performs equivalently” is a high bar. The margin for uncanny valley error is narrow.

Where the Line Is

This isn’t an argument against AI in visual production. AI used as a post-production tool on originally captured content (motion effects, color grading, background replacement, subtle animation of still photography) is a different category entirely. The source material is real. The editing is transparent.

The question is whether the viewer is being asked to trust a human presence that was fabricated rather than captured. For professional services firms, where the entire value proposition rests on human judgment and personal trust, that’s a question worth asking before the production budget gets approved.

The firms that get this right won’t necessarily be the ones that avoid AI entirely. They’ll be the ones that understand where authenticity is load-bearing… and protect it there.

Simple Rules for Professional Services Firms

If the video reflects something real (real people, real environments, real presence), it can support credibility.

If it replaces reality with something constructed, optimized, or artificial, it weakens credibility.

AI video doesn’t fail because it’s new. It fails when it creates a version of someone that feels more polished than true.

In professional services, that gap matters.

Frequently Asked Questions

Should professional services firms use AI video?

Only carefully. The question is not whether AI video can look polished. It is whether the result still feels accurate. In professional services, credibility depends on alignment between what clients see and who they actually meet.

Can AI video affect client trust?

Yes. Trust is shaped quickly, and visual content plays a role in that judgment. If AI video feels artificial, overly optimized, or disconnected from reality, it can create hesitation rather than confidence.

When is AI video appropriate?

AI video is most appropriate when the stakes are lower and the goal is efficiency rather than personal credibility. Internal communications, recruitment support, simple explainers, or background content may be reasonable uses if the result does not misrepresent real people or environments.

When should firms avoid AI video?

Firms should avoid AI video in moments where trust, leadership presence, or personal credibility are central. That includes partner introductions, leadership messaging, client-facing brand videos, and any content where a polished but artificial version of reality would weaken confidence.